Written by Nando

Madrid Devops 09/2017: Chaos Engineering with Adrian Cockroft

Below are the notes I took during the event. The talk was pretty good: full of details and interesting facts about Chaos Engineering, a new practice born from Netflix's experiences. The presentation material was still a work in progress, only a couple of weeks old, which means we were honored to be among the first people to see it.

Datacenter to cloud at Netflix

- Percentage-based A/B testing by customer ID

- Find a path: Oracle ~> Cassandra ~> DynamoDB

- Remote replication instead of SSD mirroring can be more resilient.

- Your infrastructure vs. a Simian Army:

  - Chaos Gorilla: take down a whole availability zone every month

  - Chaos Kong: divert traffic to a different region every month

- Blog post
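Percentage-based A/B testing by customer ID needs a stable way to decide which customers land in a test cell. A minimal sketch, assuming hash-based bucketing (the function names, the experiment name and the SHA-256 choice are illustrative, not Netflix's actual allocator): each customer ID is hashed into one of 100 buckets, and a test exposed to N% of customers takes the first N buckets.

```python
import hashlib


def bucket_for(customer_id: str, experiment: str, buckets: int = 100) -> int:
    """Map a customer ID to a stable bucket in [0, buckets)."""
    # Salting with the experiment name gives each test an independent split.
    key = f"{experiment}:{customer_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    return int.from_bytes(digest[:8], "big") % buckets


def in_test_cell(customer_id: str, experiment: str, percent: int) -> bool:
    """True if the customer falls inside the first `percent` buckets."""
    return bucket_for(customer_id, experiment) < percent


if __name__ == "__main__":
    ids = [f"customer-{i}" for i in range(10_000)]
    exposed = sum(in_test_cell(c, "new-player-ui", 5) for c in ids)
    print(f"{exposed} of {len(ids)} customers in the 5% cell")
```

Because the bucket depends only on the ID and the experiment name, a customer sees the same variant on every request, and growing a test from 5% to 10% keeps everyone who was already in it.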

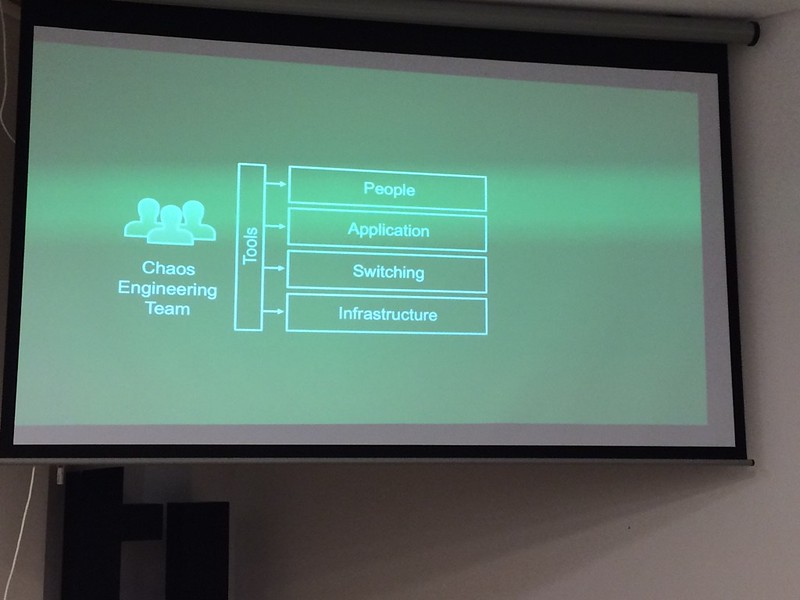

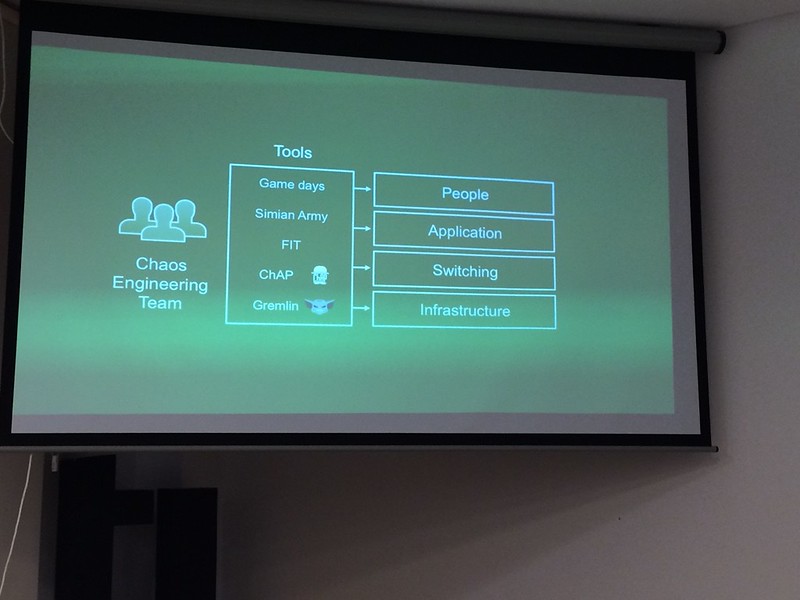

- Chaos Engineering team: work on a cloud-native availability model

- No SPOF!

- AWS has no shared resources between regions

- Region-level stickiness per account

- Reroute customers to another region during an outage, and switch them back afterwards

- Error handling is the least well-tested part of your application

- Failure modes to test in applications: error returns, slow responses, network partitions

- Test to fail

- Microservices minimize permutations for testing
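Per-account regional stickiness combined with rerouting during an outage can be sketched as a small router. This is a hypothetical model, not Netflix's traffic-steering system: the `RegionRouter` class, the byte-sum placement rule and the example region names are all assumptions. Each account keeps a home region; while that region is evacuated, its traffic goes to a healthy one, and it returns home once the region is restored.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class RegionRouter:
    """Hypothetical router: every account is sticky to a home region and is
    diverted to a healthy region only while its home region is evacuated."""

    regions: list[str]
    home: dict[str, str] = field(default_factory=dict)  # account ID -> home region
    evacuated: set[str] = field(default_factory=set)    # regions currently drained

    @staticmethod
    def _stable_index(account_id: str, n: int) -> int:
        # Deterministic across processes (unlike Python's salted hash()).
        return sum(account_id.encode("utf-8")) % n

    def home_region(self, account_id: str) -> str:
        # Stickiness: the first assignment is remembered and never changes.
        if account_id not in self.home:
            index = self._stable_index(account_id, len(self.regions))
            self.home[account_id] = self.regions[index]
        return self.home[account_id]

    def route(self, account_id: str) -> str:
        home = self.home_region(account_id)
        if home not in self.evacuated:
            return home
        healthy = [r for r in self.regions if r not in self.evacuated]
        if not healthy:
            raise RuntimeError("no healthy region left to serve traffic")
        # Divert to a healthy region; the home region is kept for switch-back.
        return healthy[self._stable_index(account_id, len(healthy))]

    def evacuate(self, region: str) -> None:
        self.evacuated.add(region)

    def restore(self, region: str) -> None:
        self.evacuated.discard(region)
```

Calling `evacuate()` on a region and later `restore()` mirrors the "reroute customers and back" step: the home assignment never changes, so switching back needs no extra bookkeeping.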

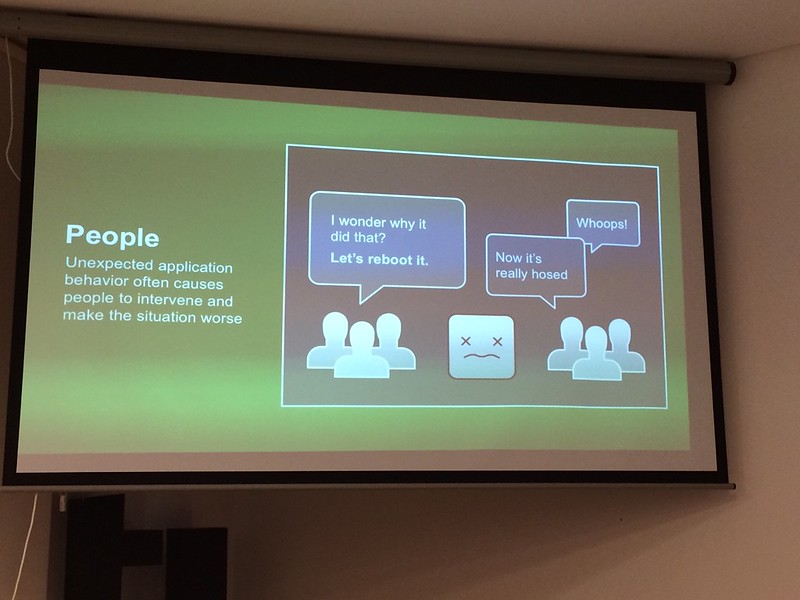

- When applications behave inconsistently, people break them

- Fire drill for IT ~> be on an incident call (Slack, PagerDuty, Statuspage)

- Release your tools as FLOSS

- Amazon is building an open source program, based on NetflixOSS

Chaos Engineering at Netflix

- Derived from the experiences above

- Failure injection testing (FIT)

- Gremlin: Network-level failure (block ports, …)
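Failure injection testing deliberately makes a fraction of calls fail or slow down, so the error-handling paths actually get exercised. The following is a minimal in-process sketch, assuming a decorator-based approach: `inject_failures`, `InjectedFailure` and the example dependency are hypothetical names, and this is not the API of FIT or Gremlin, which inject failures at the platform and network level.

```python
import random
import time
from functools import wraps


class InjectedFailure(Exception):
    """Raised instead of calling the real dependency when a failure is injected."""


def inject_failures(error_rate=0.0, latency_rate=0.0, latency_s=0.0, rng=None):
    """Decorator: make a fraction of calls slow and/or fail.

    Rates are probabilities in [0, 1]. Pass a seeded random.Random as rng
    to make an experiment reproducible.
    """
    rng = random.Random() if rng is None else rng

    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            if rng.random() < latency_rate:
                time.sleep(latency_s)  # simulate a slow response
            if rng.random() < error_rate:
                raise InjectedFailure(f"injected failure in {func.__name__}")
            return func(*args, **kwargs)
        return wrapper
    return decorator


# Hypothetical dependency and caller, used only to exercise the decorator.
@inject_failures(error_rate=0.3, rng=random.Random(7))
def fetch_recommendations(user_id):
    return [f"title-{user_id}-{i}" for i in range(3)]


def recommendations_with_fallback(user_id):
    try:
        return fetch_recommendations(user_id)
    except InjectedFailure:
        return ["popular-title"]  # degrade gracefully instead of failing the page
```

The point of the experiment is the caller: with 30% of calls failing, `recommendations_with_fallback` should still always return something usable.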

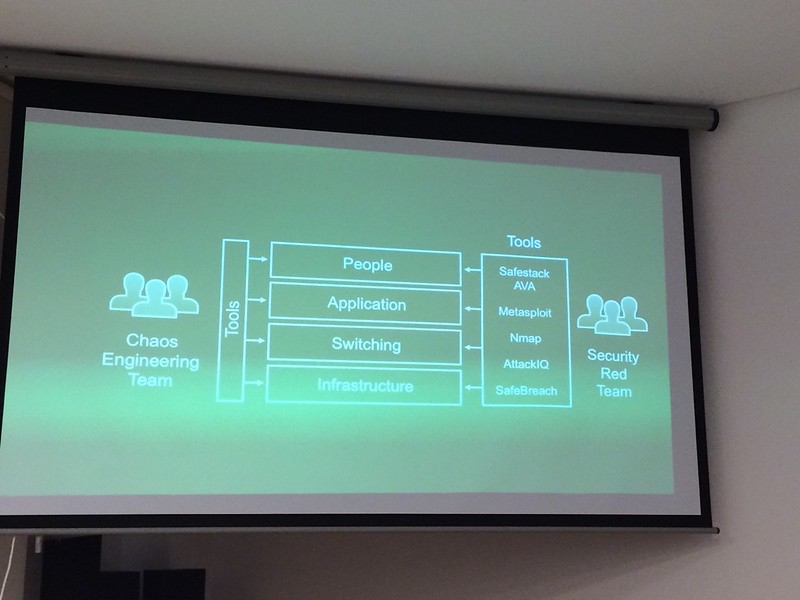

- Red team: Chaos Engineering team

- Blue team: SRE team

- Break it to make it better

- Chaos manifestos:

- Measure 99.9% availability through all four layers

- People are the weakest link

- Free O’Reilly ops ebooks

- Main site with lots of references

- Chaos Engineering
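The notes do not say which four layers the talk meant. As a hedged illustration, assume four layers in series whose failures are independent, each 99.9% available. End-to-end availability is then the product of the per-layer figures, which is one reason to measure at every layer rather than only one:

$$
A_{\text{total}} = \prod_{i=1}^{4} A_i = 0.999^{4} \approx 0.9960
$$

That is roughly 35 hours of downtime per year (about 0.004 × 8760 h), against about 8.8 hours for a single 99.9% layer.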

Q&A

- Existing projects will break

- Fight the existing entropy before you can fight chaos

- Greenfield projects: start with Chaos Monkey, chaos failover and more tools

- An app goes to production only if it passes the failover test: extreme QA

- Apps: no persistence, only retry policies and HA caches (state is its own layer)

- Pain points

  - What people (users) are used to

  - You can’t really get around the CAP theorem (revisited)

  - Garbage collection === network outage

- “The Network Is Reliable” paper

- Michael Nygard’s book: “Release It!”

- The performance team reallocates engineers/work to fix inefficient parts of the system

- SRE teams measure availability and respond rapidly to incidents

- Chaos mitigation: graceful failure absorption and capacity failure

- This is really an old concept: “Improve the security of your system by breaking into it”
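The notes mention that stateless apps rely on retry policies. The talk did not specify a policy; the sketch below uses capped exponential backoff with "full jitter", one common choice, and every name and default in it (`call_with_retries`, `max_attempts`, `base_delay`, `max_delay`) is a hypothetical placeholder. Retries are only safe for idempotent calls, which fits the point that state lives in its own layer.

```python
import random
import time


def call_with_retries(func, max_attempts=4, base_delay=0.1, max_delay=2.0,
                      retry_on=(ConnectionError, TimeoutError),
                      rng=None, sleep=time.sleep):
    """Call func() and retry transient failures with capped exponential
    backoff plus full jitter; re-raise the last error when attempts run out."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    rng = random.Random() if rng is None else rng
    for attempt in range(max_attempts):
        try:
            return func()
        except retry_on:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the failure to the caller
            # Full jitter: wait a random time between 0 and the capped backoff.
            cap = min(max_delay, base_delay * 2 ** attempt)
            sleep(rng.uniform(0, cap))
```

Randomizing the wait spreads out retries from many clients, so a dependency that is recovering (or pausing for garbage collection) is not hit by a synchronized burst. Errors outside `retry_on`, such as a bad request, propagate immediately.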

Follow @MadridDevops on Twitter.

Link to the video (YouTube)